AI Integrations (upcoming)

Note

This is an example/preview of upcoming Creator features and is not representative of the final product in any shape or form.

AI models are becoming more and more powerful. They already help automate various menial tasks and generate code, images, and other forms of data, so integrating them into Creator is the natural next step for us.

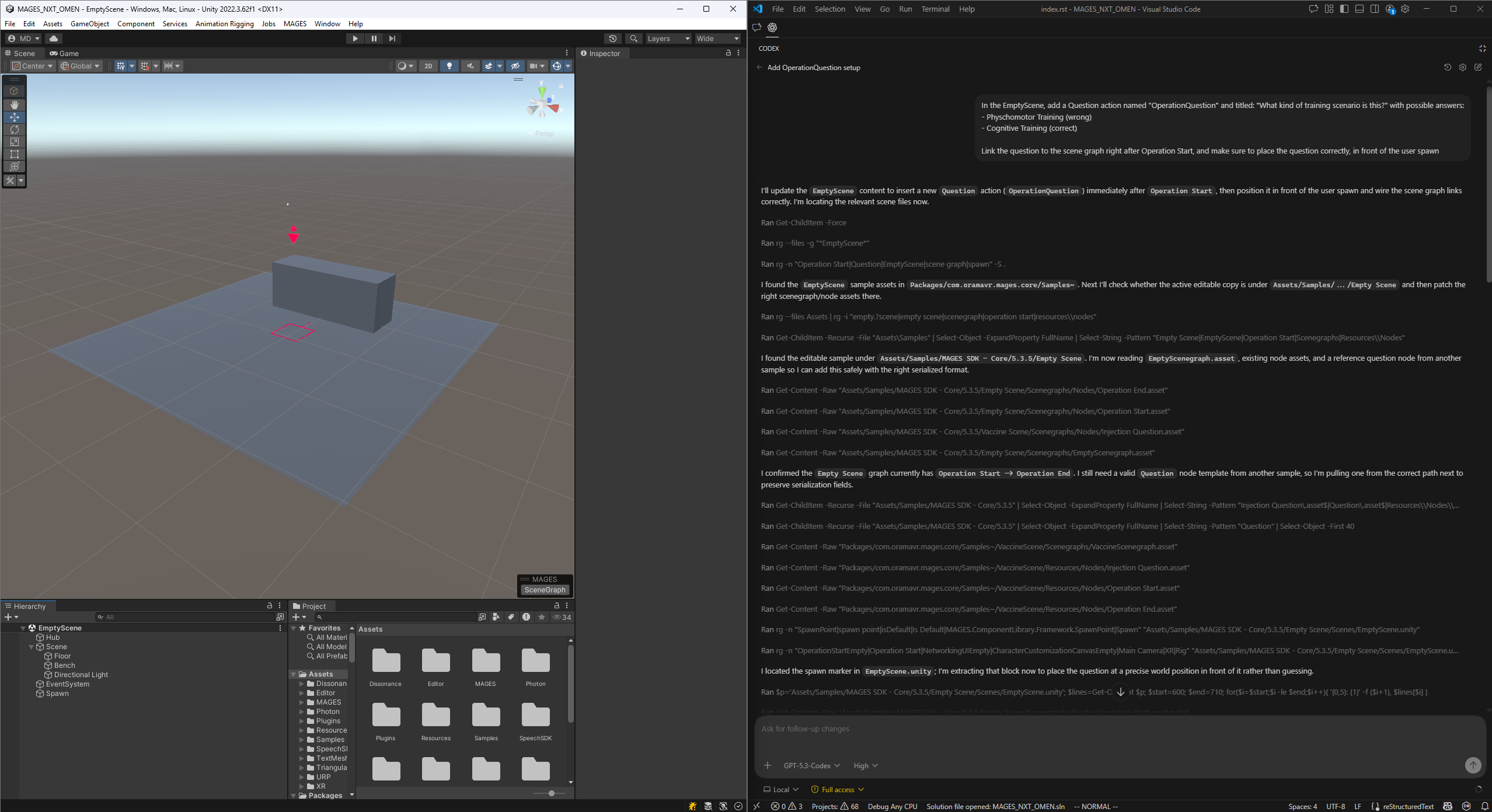

We tested a very simple scenario: Create a Question Action in the Empty Scene sample.

Setup

The setup was straightforward. We used a project with the latest version of Creator, with the “Empty Scene” sample.

The Prompt

In the EmptyScene, add a Question action named "OperationQuestion" and titled: "What kind of training scenario is this?" with possible answers:

- Physchomotor Training (wrong)

- Cognitive Training (correct)

Link the question to the scene graph right after Operation Start, and make sure to place the question correctly, in front of the user spawn

This was run on Unity 2022 with Creator 5.3.6, using GPT-5.3-Codex with High Reasoning.
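To make the content of the prompt easier to scan, here is a minimal sketch that restates it as plain data. It is not Creator's API: the class names, field names, and the rule that exactly one answer is correct are all assumptions made for illustration. The answer texts are copied verbatim from the prompt.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    text: str
    correct: bool


@dataclass
class QuestionActionRequest:
    # Hypothetical container; these field names are not part of Creator.
    scene: str
    name: str
    title: str
    answers: list[Answer] = field(default_factory=list)
    link_after: str = "Operation Start"
    placement: str = "in front of the user spawn"

    def validate(self) -> None:
        """Check the request is well formed (assumed rules, see lead-in)."""
        if len(self.answers) < 2:
            raise ValueError("a question needs at least two answers")
        if sum(a.correct for a in self.answers) != 1:
            raise ValueError("exactly one answer must be marked correct")


# The same information the prompt above expresses in natural language.
request = QuestionActionRequest(
    scene="EmptyScene",
    name="OperationQuestion",
    title="What kind of training scenario is this?",
    answers=[
        Answer("Physchomotor Training", correct=False),
        Answer("Cognitive Training", correct=True),
    ],
)
request.validate()
```

Written out this way, the prompt carries six pieces of information: the scene, the action name, the title, the answers, the graph link, and the placement.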

Result

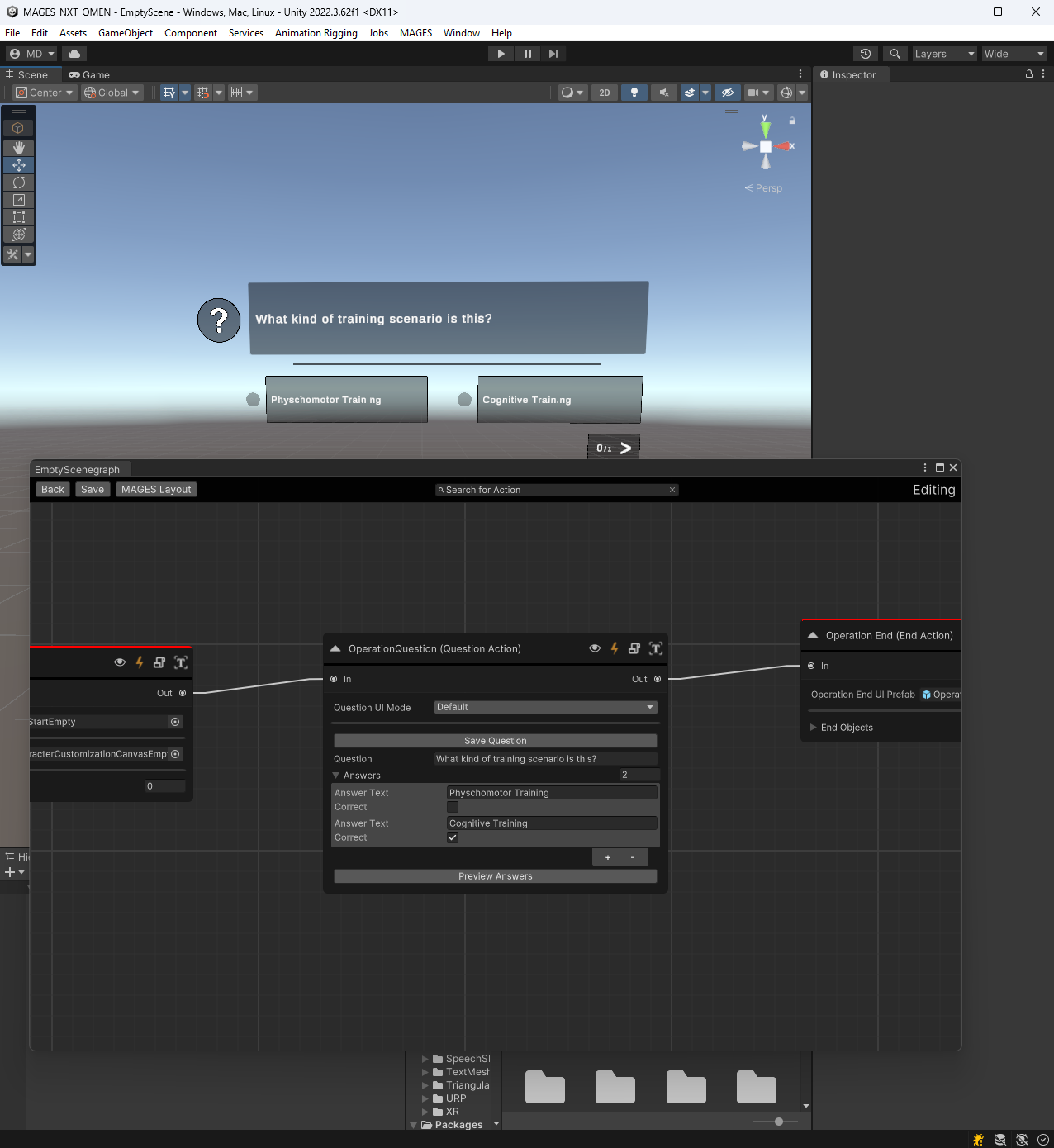

And it generated the following:

A fully working action, placed correctly in the context of the scene (in front of the user spawn point), with all of the information filled out. Instead of the many clicks a typical action requires, you will soon be able to describe what you want and get it.

This is the purpose and main goal of OMEN.